While philosophers seem to thrive on conflict and would really have nothing to say at all without substantial disagreements, they are remarkably consistent on how to respond to death, dying, and loss. Most recently, I have turned to the work of Al-Kindi, who lived from about 801 to 866 in Baghdad, for advice on how to respond to grief. Al-Kindi gives us the example of the mother of Alexander the Great.

As his death approached, Alexander wrote to his mother to prepare her for the loss of her child. As Al-Kindi tells it, Alexander said, “Do not be content with having the character of the petty mother of kings: order the construction of a magnificent city when you receive the news [of the death] of Alexander!” Everyone in Africa, Europe, and Asia should be invited to a great celebration of his life, with one proviso: anyone struck by similar misfortune should not come. After his death, his mother was mystified that no one obeyed and attended the funeral, until someone pointed out to her that no one had ever escaped the type of misfortune she was experiencing, and those with similar losses had been told not to come.

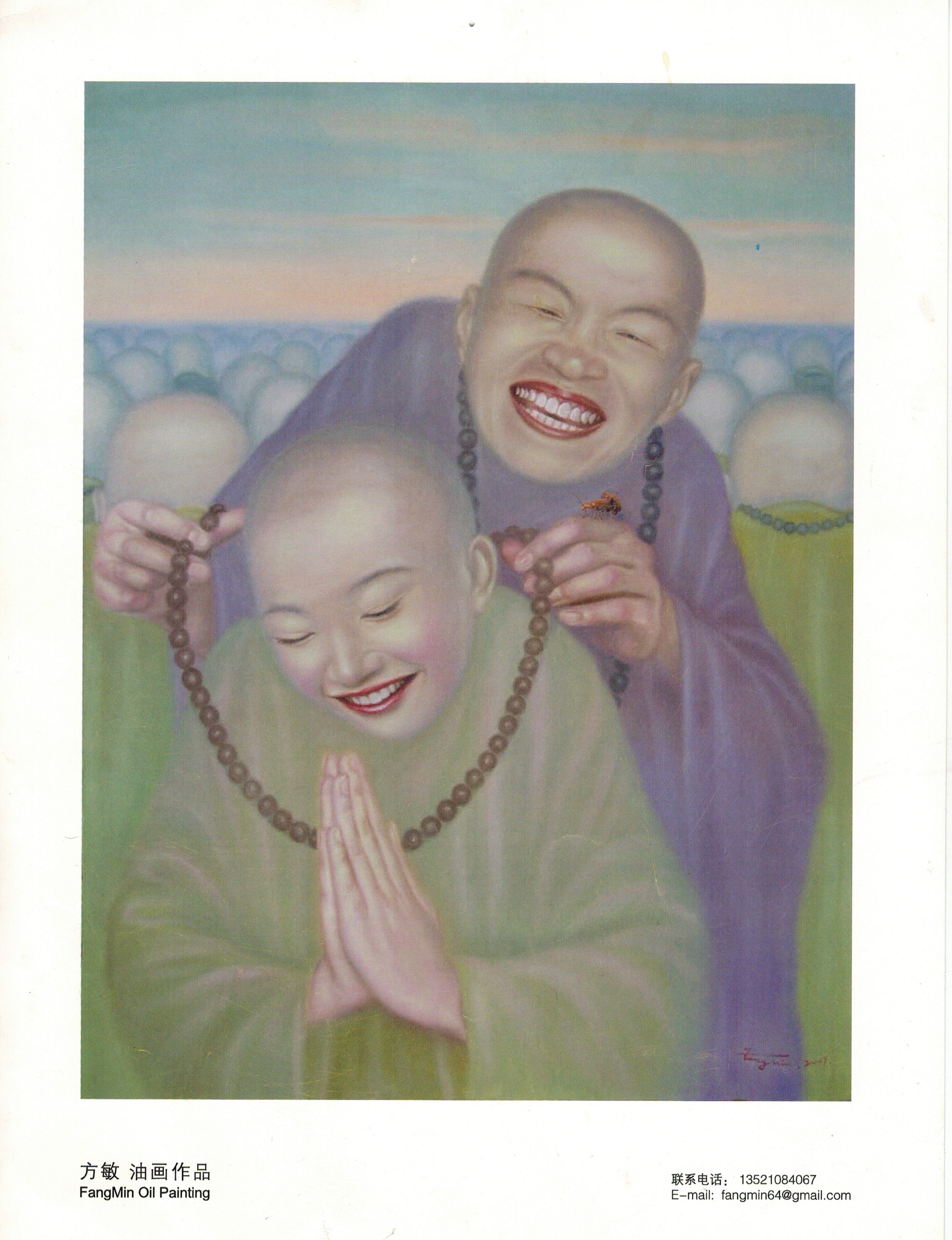

Al-Kindi says Alexander’s mother exclaimed, “O, Alexander! How much your end resembles your beginning! You had wanted to console me in the perfect way for the misfortune of your death.” This story of consolation is similar to the Buddhist parable of Kisa Gotami, who lost her young son and was advised by the Buddha to collect a mustard seed from every family that had not lost a close relative. Of course, she was unable to find any family that had not faced loss, so she realized her suffering was universal and took comfort in the teachings of Buddhism.

German philosopher Arthur Schopenhauer, himself influenced by Buddhist texts, also points us to the suffering of others for comfort: “The most effective consolation in every misfortune and every affliction is to observe others who are more unfortunate than we, and everyone can do this. But what does that say for the condition of the whole?” Indeed, the suffering of others may make us feel petty for our complaints, but it does little to relieve our pessimism about life. But maybe we just cling to life too tenaciously.

Al-Kindi tells us that all our possessions are only on loan to us and that “the Lender has the right to take back what He loaned and to do so by the hand of whomever he wants.” He says we should not see our loss as a sign of disgrace; rather, “the shame and disgrace for us is to feel sad whenever the loans are taken back.” He is speaking of possessions in this instance, not of children, but I’ve heard many people say that our children are only “on loan” from God, who can call them home at any moment. I personally have never found any comfort in this, and I wonder whether anyone has ever felt the brunt of loss softened by the thought of a merciful God calling in His loans.

No matter what happens, Al-Kindi tells us we should never be sad, as sadness is not necessary and “whatever is not necessary, the rational person should neither think about nor act on, especially if it is harmful or painful.” Many philosophers echo this sentiment. We should trust that God has created a world that is perfect according to God’s design; therefore, we should accept the vicissitudes of life with equanimity. This advice is almost universally dispensed and almost universally not followed for a simple reason: sadness is an involuntary reaction to loss and pain.

Al-Kindi tells us that death is not an evil, because if there were no death, there would be no people. By extension, if what is thought to be the greatest evil, death, is not evil, then anything thought to be less evil than death is also not evil. As such, we have no evil to fear in our lives. From these assertions, Al-Kindi claims that we bring sorrow to ourselves of our own will. A rational person would not choose such a form of self-harm, so depression and mourning can be controlled through the proper exercise of reason.

Most ancient philosophers, and many contemporary ones, will tell us that letting our rational nature rule our emotional nature will ease our pain in the face of loss. Certainly, a rational examination of death, life, and loss helps us to make sense of our suffering, but it does not eliminate suffering. In fact, if you see grief as a moral failing, which many thinkers have said it is, I believe your suffering is compounded. Grief, hard enough to bear on its own, becomes a catalyst for an explosion of guilt and shame.

While it is important to examine the causes of our suffering and explore what meaning loss brings to our lives, denying the necessity of grief is as useless as denying the necessity of breathing. While I can accept that Al-Kindi’s description of death is accurate, it only helps me come to terms with the prospect of losing my own life. For each of us, our own death brings a promise of relief, but the death of our loved ones only brings relief when they are so burdened by suffering that we can no longer bear to see life oppressing them.

Death is still an evil, because it robs me of the people that make my life meaningful. It threatens to rob me of the people, indeed, who may make my life bearable. It is possible to imagine that death is not an evil, but, more importantly, we must recognize that love is certainly a good, and to lose those we love is an excellent reason to mourn. Mourn freely, I say, without guilt and without shame.